
The downsides? In its end state (no lake), Snowflake can be very expensive. In the interim, managing a two tiered architecture (warehouse + lake) can be challenging (maintaining consistency). I'd love to see Snowflake find a way to better separate / charge for cold storage.

Moving on to the Databricks / Lakehouse approach. The combo of delta lake + delta engine (or something like Presto) is a great alternative to the cost of Snowflake. It's much cheaper (especially w/ real scale) and you only have to worry about one storage layer (the data lakehouse).

Challenges? Databricks is so centered around Spark. Can they move into BI? IMO the idea of centralized storage for all data is organizationally challenging. What use cases does direct access to parquet files enable? And do you really need direct SQL access to the raw lake data?

Of course, there's always the middle ground. I think there will always be use cases for lakes and warehouses to sit alongside each other (two tiered), and that might end up being the best approach.

Many thanks to Bryan Offutt and Matt Slotnick for indulging me over the weekend to chat about data architectures. Lots of this thread was inspired by conversations I had with them. Just the standard stuff we choose to talk about in our free time :)

I think you may have a fundamental misunderstanding of the value Snowflake brings to the Data Lake use case. There is a reason why Snowflake no longer calls itself a "Cloud Data Warehouse": that term is overloaded and can confuse people about the workloads Snowflake can take on. Some of the earliest and largest Snowflake wins were really Data Lake use cases.

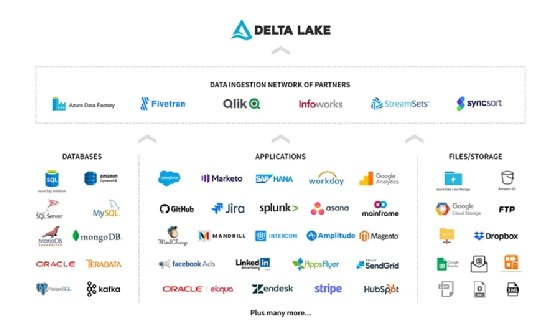

Delta Lake brings the best functionality of the warehouse into the lake (structured tables, reliability, quality, performance). And converting from parquet to delta lake is simple. In Databricks’s paper on the Lakehouse architecture they describe it as: “a transactional metadata layer on top of the object store that defines which objects are part of a table version. This allows the system to implement management features such as ACID transactions or versioning within the metadata layer, while keeping the bulk of the data in the low-cost object store and allowing clients to directly read objects from this store using a standard file format in most cases.”

Here's how Databricks sees the world: They say to the customer, keep your data in your own managed lake / object store (S3, ADLS, HDFS, etc). We'll then provide a managed Delta Lake experience on top. Above the Delta Lake you can still do the data processing with Spark / DataFrames for ML / data science, and then we'll offer our own query engine (Delta Engine) to allow BI analysts / business analysts to access data with SQL (SQL access is important).

The SQL access to data lake storage is huge. It's a market we're seeing Dremio and Starburst go after. The reason these tools emerged is to offer direct SQL access to raw / object storage.

In summary, Databricks will offer a Delta Lake within the lake and then allow SQL access to data for BI (through their Delta Engine), and processing / data prep for data science / ML (through Spark + DataFrame APIs).

What are advantages / disadvantages of both?

For Snowflake, compute / storage scale independently, and the tools around it (like Fivetran, dbt) make it easy to get data in and then transformed. Ultimately it's incredibly performant and powerful.

Delta Lake is a new open source standard for building data lakes. IMO Delta Lake is super powerful.
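To make the "transactional metadata layer" from the Lakehouse paper a bit more concrete, here's a toy Python sketch. It is not Delta Lake's actual implementation or API: the `ToyLakehouseTable` class, its method names, the file names, and the plain dict standing in for an object store are all made up for illustration. The only idea it tries to show is that an append-only log decides which objects belong to each table version, while the data files themselves just sit in cheap storage.

```python
from dataclasses import dataclass, field


@dataclass
class ToyLakehouseTable:
    """Hypothetical toy: an append-only commit log over an object store."""

    # path -> rows; a dict standing in for S3 / ADLS / HDFS (assumption)
    object_store: dict = field(default_factory=dict)
    # log[i] is the commit that produces table version i
    log: list = field(default_factory=list)

    def commit(self, add=None, remove=()):
        """Record added/removed files as one log entry; return the new version."""
        add = add or {}
        # Data objects are written first; readers ignore them until the
        # log entry that references them has been appended.
        self.object_store.update(add)
        self.log.append({"add": sorted(add), "remove": sorted(remove)})
        return len(self.log) - 1

    def files_at(self, version=None):
        """Replay the log to find which objects are part of a table version."""
        if version is None:
            version = len(self.log) - 1
        live = set()
        for entry in self.log[: version + 1]:
            live |= set(entry["add"])
            live -= set(entry["remove"])
        return sorted(live)

    def read(self, version=None):
        """Read rows directly from the object store for the given version."""
        rows = []
        for path in self.files_at(version):
            rows.extend(self.object_store[path])
        return rows


table = ToyLakehouseTable()
v0 = table.commit(add={"part-0.parquet": [{"id": 1}, {"id": 2}]})
v1 = table.commit(add={"part-1.parquet": [{"id": 3}]})
# Deleting id=2 rewrites part-0: the new file is added and the old one
# removed in a single commit, so readers see either all of it or none of it.
v2 = table.commit(add={"part-2.parquet": [{"id": 1}]}, remove=["part-0.parquet"])

print(table.files_at())  # files in the latest version
print(table.read(v1))    # "time travel" back to version 1
```

Note that the old `part-0.parquet` object is never touched by the delete. It simply stops being referenced by the latest version, which is what makes reading an older version possible in this sketch.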

Conversely, Databricks has taken the "Lakehouse" approach (data lake at the center, not data warehouse). You might be thinking, "Databricks doesn't have a storage layer?" While that may have been true historically, times are changing with the rise of the Delta Lake technology.
